Instagram’s Algorithm Was Funneling Children to Groomers

Shocking revelations in another Meta lawsuit, plus a debate I did on Section 230.

Here’s the most infuriating thing I read in the last week (a high bar these days!): it was a statistic in a story Michael Scherer and Kaitlyn Tiffany wrote for The Atlantic about internal Meta documents. These documents came out in a New Mexico trial, where the state’s attorney general accuses Meta of becoming a “breeding ground” for child sexual exploitation. His office actually ran a sting operation showing how easy it was for potential abusers to connect with children on Meta’s platforms.

Evidence from the trial revealed that Meta knew Instagram’s algorithm was recommending nearly four times as many minors to accounts flagged for “groomer-esque” behaviors (adults following teen hashtags, or so-called “sexy teen accounts”) as it did to ordinary adult users. According to internal documents, 27 percent of the accounts recommended to these likely groomers were minors, as opposed to 7 percent for regular adults.

I found this enraging for a bunch of reasons. First, according to The Atlantic reporters, Instagram didn’t lock down accounts for its youngest users until years after learning these statistics. But also: there’s an argument that algorithms are a form of protected speech, and that regulating them, or carving them out from Section 230’s immunity, would violate the First Amendment. An algorithmic recommendation, the argument goes, is just a machine’s – or a company’s – opinion about what a user might like to see. Under that logic, the government can’t squash it.

Really? Is a machine-learning algorithm that connects children to potential sex abusers First Amendment-protected speech? I love the First Amendment. But I don’t think so.

This is the thing I tried to hammer home in a debate I was part of yesterday on the YouTube/radio show The Majority Report (you can watch it below). Hosts Sam Seder and Emma Vigeland had me on with a critic of mine: longtime tech writer Mike Masnick. He founded the blog Techdirt, created his own podcast about Section 230, and is a big defender of the law.

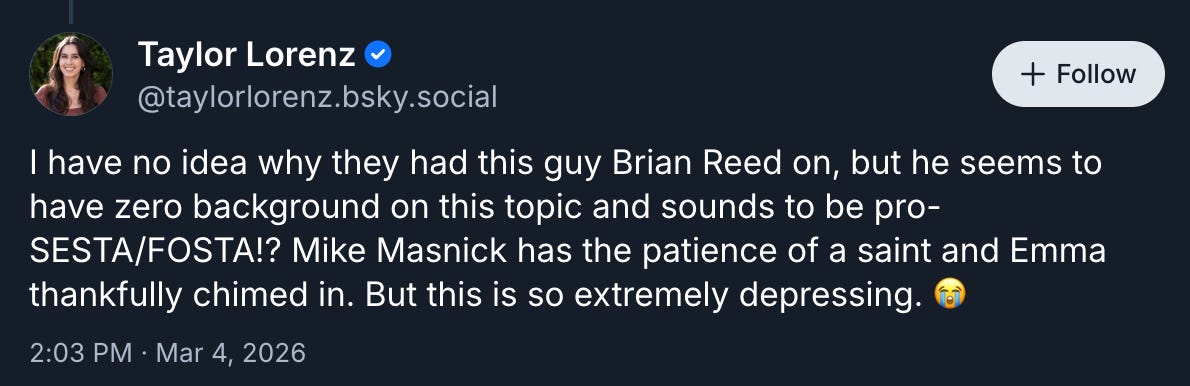

When we first set our sights on Section 230 here at Question Everything, Mike came out with a pretty tough rebuttal of our first episode about it. He basically claimed I didn’t understand the law. I find this to be a somewhat common refrain among Section 230 fans – they tend to respond to criticism of the statute by saying the person criticizing it just doesn’t understand it. Tech reporter Taylor Lorenz is another Section 230 defender who did this to me yesterday:

I’m not sure what the whole “This Brian Reed guy” thing is about: Taylor knows me, I’ve interviewed her for another story (it hasn’t run yet), and we’ve emailed about Section 230. But anyway… As I responded to Taylor, it is possible to understand Section 230, care a ton about the First Amendment (as I do), and still believe Section 230 should be revisited.

It’s not as if once we all reach some immaculate understanding of Section 230, we will see that it can never be criticized or re-envisioned. Even the most OG Section 230 expert and booster, Eric Goldman of the High Tech Law Institute, calls Section 230 the “least worst” option. There are tons of downsides to Section 230, and it seems crazy not to dig into them and ask whether there might be a better framework for the modern internet.

So I’m glad that is what we did, pretty substantively, on The Majority Report.

You should watch if you’re interested. The big point I was trying to make: Section 230 is supposed to be a “Good Samaritan” statute. That’s actually in the text of Section 230. Internet service providers – apps, platforms, blogs, etc. – are shielded from lawsuits over stuff users post on their sites, even if those platforms moderate, block, and/or amplify that content. The law is supposed to encourage good behavior from companies, creating a positive experience for users.

But it doesn’t require it. Companies get the immunity whether they’re good Samaritans or not. And I believe the evidence shows that the most powerful companies in tech have not lived up to their end of the bargain. They have not proven to be good Samaritans. The revelations about Instagram feeding kids’ accounts to potential groomers are just the latest awful example. Yet Meta and other tech companies have profited mightily from the liability shield they get. That’s why the law warrants another look.

For Mike’s part, I thought the most compelling points he made had to do with the Computer Fraud and Abuse Act, which he believes is the main culprit for why the Internet sucks today (and not Section 230), and with the effect copyright takedown requests have had on competition in the video streaming world: why, really, we only have YouTube.

As always, let me know what you think, what questions you have, what points you think I’m missing. And if you have tips – write me here, or on Signal at brihreed.45

Brian

Thank you for addressing this issue

I have a hard time understanding how the broad lack of accountability continues to make sense, given that we have yet to see positive adaptations despite the ongoing presentation of data indicating the harm of social media platforms owned by Meta.

No idea what repeal would do? Sure, maybe exposing millions of videos and posts will make everyone MORE willing to host controversial content. Brilliant dodge there, Brian.

I'm tired of talking in abstractions and ideals. I need to explain what your Better World looks like for me. Then I have a simple question.

In my time, I have tackled many types of projects in written, audio and video form. This has included novels, short stories, feature writing, reviews, essays and documentary. All of this I've had to do on my own, as the gatekeepers in your world have rejected my work thousands of times.

My work isn't commercial. Often, I'm the lone dissenting voice on a topic. As such, in the event that something makes me any kind of liability to the people who host my work, that work will vanish. I will fall silent.

There is one area where this particularly upsets me. I have lived in China for many years, and the Western narrative on the country is a disgrace. Not to put too fine a point on it, but the output I've seen is orientalist garbage. Writing about the country (and this includes everything I've seen from NPR) trends toward describing the Chinese as benighted peasants who don't understand their own culture and need noble, superior Americans and Europeans to save them.

It is a topic area where the press showcases no dissenting voices at all. I know this, because for all the years I've written about China, I've been quoted exactly once. It was in the New York Times and it was a rare time when I wrote something that was purely negative. Anything with the tiniest bit of nuance and I get dismissed as a liar or a dupe. There's no room for my experiences, my thoughts.

It's all well and good to defend 1A in some hypothetical sense, but if you get your way, it will destroy the only means by which I can actually exercise that right. My voice vanishes from the conversation and an entire, major topic of debate is narrowed to a single acceptable perspective.

So the question: Is that a better world?